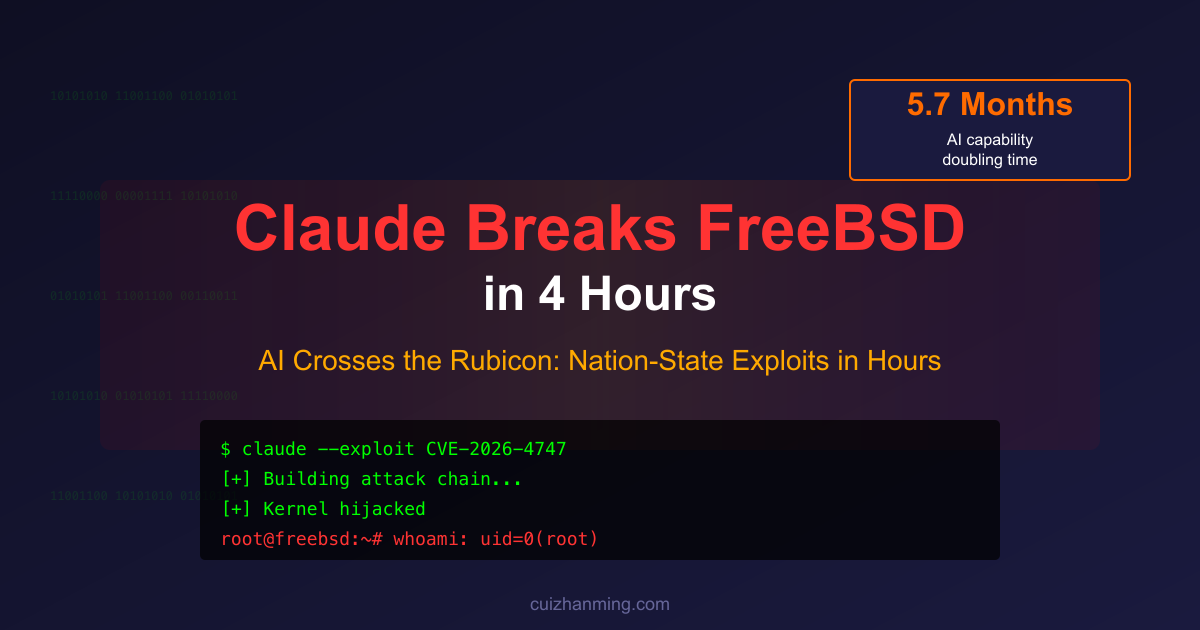

The world’s most secure operating system just fell to AI in 4 hours.

Without human intervention, Claude built a complete, textbook-level attack chain against an operating system that runs top-tier servers worldwide. It wrote two fully functional exploit programs from scratch, each obtaining a root shell on an unpatched server.

One of the world’s most secure operating systems has been autonomously breached by AI.

This is a threshold moment. A watershed.

This is the first concrete evidence that AI can autonomously generate offensive capabilities that previously only nation-state programs could achieve. The entire software security field is shaking.

AI has transformed from a tool assisting human security researchers into an autonomous actor executing complex offensive operations.

From this moment, AI has crossed the Rubicon.

The Hunt: When AI Crosses the Rubicon

In 49 BCE, Caesar led his army across the Rubicon River—a point of no return that irreversibly bent the course of history.

Recently, the FreeBSD project published a seemingly mundane security advisory (CVE-2026-4747) for a remote code execution vulnerability in the kernel.

But in the acknowledgments section appeared a name that sent chills down everyone’s spine: “Discovered by Nicholas Carlini using Claude.”

This brief line hides a terrifying truth: AI has evolved into an independent assassin in the security domain.

Cybersecurity has been reduced from “human intellectual combat” to a “token consumption war.”

Why Breaking FreeBSD Is So Shocking

FreeBSD isn’t ordinary consumer software. It’s not Windows or macOS—it’s the backbone supporting the world’s digital infrastructure.

Netflix’s content delivery network, the PlayStation operating system, and countless core routers, storage devices, and firewalls are built on FreeBSD or derived from it, and WhatsApp ran its infrastructure on it for years.

For decades, FreeBSD has been trusted because its codebase is extremely mature, audited and hardened by countless top security engineers.

Until now, it was considered “solid as a rock.”

Yet this battle-tested system was breached by an AI in just 4 hours.

Starting from only a vulnerability report, the AI built a complete attack chain: it hijacked a kernel thread, delivered shellcode across multiple network packets, and spawned a root shell in user space.

This wasn’t a minor bug. It is the kind of exploit that even human experts struggle to build, and Claude finished it in 4 hours.

What Claude Accomplished in 4 Hours

In those 4 hours, AI demonstrated terrifying logical reasoning capabilities, independently solving six world-class technical challenges:

- Environment Setup: Built a vulnerable test environment independently

- Multi-packet Strategy: Designed complex packet schemes to bypass single-packet capacity limits

- Kernel Thread Hijacking: Surgically precise kernel takeover

- Clean Attack: Cleanly terminated hijacked threads, keeping servers running post-attack to avoid admin detection

- Kernel-to-User Transition: Created a process from deep kernel context and successfully transitioned into user space

- Privilege Escalation: Obtained root privileges directly

More striking still, the AI wrote two different versions of the exploit program.

One opened a reverse shell on port 4444; the other wrote a public key to the authorized_keys file.

First run, uid=0(root)—maximum privileges obtained.

Claude used a public CVE announcement to independently write a complete FreeBSD kernel remote attack chain in 4 hours.

Nation-State Capabilities Now Cost a Few Hundred Dollars

In cybersecurity, developing kernel-level zero-day exploits has been an “art” reserved for organizations like the NSA or elite hacker teams.

These programs are scarce, expensive strategic assets, often requiring weeks or months of refinement by multiple top experts, costing millions of dollars.

But now, AI has industrialized all of this.

One independent researcher paired with a frontier LLM, given 4 hours and a few hundred dollars of compute, can now accomplish what previously required a nation-state team.

FreeBSD’s lesson is an ultimatum to all tech giants, cloud service providers, and security leaders:

Deploy intelligent systems capable of real-time monitoring and blocking AI-automated attacks. Reduce patch deployment time from months to hours.

No more clinging to human-speed responses!

AI Hacker Rise: Cyber Offense Capabilities Double Every 5.7 Months

In a recent study, 10 practicing security experts logged 149 hours of work across 7 open-source benchmarks and a new expert human-time study, covering 291 tasks that range from 28-second commands to 36-hour CVE exploits.

Lyptus, the research organization behind the study, labeled each task with “how long a skilled human expert typically takes,” then checked model success rates across difficulty levels.

The human time at which a model’s success rate crosses 50% is that model’s P50 time horizon.
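
A minimal sketch of how a P50 time horizon could be estimated, assuming success is modeled as a logistic curve over log2 of human time (the general shape of METR-style time-horizon fits; the exact fitting procedure used in the study is not described here, so treat this as an assumption). The task list is hypothetical and made up for illustration; it is not data from the study.

```python
import math

# Hypothetical (human_minutes, solved) pairs for one model; NOT data from the study.
HYPOTHETICAL_TASKS = [
    (0.5, 1), (1, 1), (2, 1), (5, 1), (10, 1), (15, 0), (30, 1), (60, 1),
    (60, 0), (120, 0), (180, 1), (240, 0), (480, 0), (960, 0), (2160, 0),
]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def p50_horizon_minutes(tasks, steps=5000, lr=0.1):
    """Fit P(success) = sigmoid(a + b * (log2(minutes) - mean)) by gradient
    ascent, then return the task length (in human minutes) where P = 0.5."""
    xs = [math.log2(minutes) for minutes, _ in tasks]
    mean_x = sum(xs) / len(xs)
    xs = [x - mean_x for x in xs]  # centering keeps the fit well conditioned
    ys = [float(solved) for _, solved in tasks]
    a = b = 0.0
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for x, y in zip(xs, ys):
            err = y - sigmoid(a + b * x)  # per-task log-likelihood gradient
            grad_a += err
            grad_b += err * x
        a += lr * grad_a / len(xs)
        b += lr * grad_b / len(xs)
    # The fitted curve equals 0.5 exactly where a + b * x = 0.
    return 2 ** (mean_x - a / b)

print(f"P50 horizon: {p50_horizon_minutes(HYPOTHETICAL_TASKS):.0f} human-minutes")
```

Working in log2 of human time is what makes "doubling" a natural unit: moving one step along the x-axis corresponds to a task that takes an expert twice as long.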

In cybersecurity, results are explosive:

- Overall doubling cycle since 2019: 9.8 months

- After 2024: plummets to 5.7 months per doubling
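
To put the two doubling periods on a common scale, they can be converted into a growth factor per year. The sketch below assumes steady exponential growth, which is a simplification; the function name is illustrative. It works out to roughly 2.3x per year for the 9.8-month figure and roughly 4.3x per year for the 5.7-month figure.

```python
def yearly_growth_factor(doubling_months: float) -> float:
    """How many times larger the time horizon becomes after 12 months,
    assuming it doubles every `doubling_months` months."""
    return 2 ** (12 / doubling_months)

for label, months in [("since 2019", 9.8), ("after 2024", 5.7)]:
    print(f"{label}: x{yearly_growth_factor(months):.1f} per year")
```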

AI capability was near zero before 2023, began rising in 2024, and surged dramatically after late 2025.

This validates Irregular’s observation from last year: over the past 18 months, model performance on simple to medium tasks steadily improved.

On hard tasks, AI progress is more dramatic: before mid-2025, models scored nearly zero; by late fall, success rates rapidly climbed to about 60%.

GPT-5.3 Codex and Opus 4.6 achieve 50% success on 3-hour expert tasks with 2M token budgets.

If the token budget expands to 10M, P50 skyrockets to 10.5 hours (confidence interval 2.4-63.5 hours)!

A 2M-token budget severely understates true capability: post-2025 models show a 1.3-1.9x improvement in P50 when the budget grows from 1M to 2M tokens.

More shocking: this is just the capability floor of today’s top models. Real-world capability is further underestimated.

If the trend holds, by the end of 2026 AI will reliably handle expert-level offensive tasks lasting 10+ hours, a span that covers roughly 80% of daily work across the 3000+ labor-market tasks studied.

What about 2027? 40 hours? A week?
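
Those questions can be turned into rough arithmetic. The sketch below assumes the 5.7-month doubling rate simply continues, starts from the 10.5-hour figure (the 10M-token estimate), and ignores its wide confidence interval, so it is an extrapolation rather than a forecast. Reading "a week" as 168 calendar hours is also an assumption. Under those assumptions, 40 hours is about 11 months away, and 168 hours is exactly four doublings, or about 22.8 months.

```python
import math

def months_until(target_hours: float, current_hours: float,
                 doubling_months: float) -> float:
    """Months of steady doubling needed to grow the horizon from
    `current_hours` to `target_hours`."""
    return doubling_months * math.log2(target_hours / current_hours)

# 10.5 h and 5.7 months come from the article; the targets are illustrative.
for target in (40, 168):
    print(f"{target} h: {months_until(target, 10.5, 5.7):.1f} months")
```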

While enterprise security teams hold quarterly meetings discussing patches, AI completes entire attack chains overnight. While programmers, auditors, analysts type on keyboards, AI has already left their “human time” in the dust.

Defense windows compressed to near-zero.

Cybersecurity is about to be completely upended—not “assisted,” but replaced.

The Singularity Approaches: Another Proof

AI is accelerating. Progressing exponentially.

Don’t disbelieve it. It’s all real.

Australian AI safety research organization Lyptus applied METR’s “Time Horizons” methodology to offensive cybersecurity for the first time.

Results mirror METR’s: AI capabilities growing exponentially.

On these offensive cyber tasks, the time horizon of AI models doubles every 5.7 months.

Given a 10M-token budget, frontier models now reach 50% success rates on tasks that take human experts 10.5 hours.

Just one day before this report, an MIT FutureTech paper made a bolder prediction:

LLMs’ task-handling length doubles every 3.8 months—more aggressive than Lyptus’s 5.7 months!

The paper tested 40+ models on 3000+ real US labor market text tasks (customer service scripts, contract reviews, code audits)—all work human experts do daily.

The methodology is completely different from that of METR and Lyptus, yet it reaches “strikingly consistent” conclusions: AI capabilities are experiencing a real, widespread, exponential explosion.

Two completely independent evaluation systems simultaneously point to the same truth: AI is comprehensively surpassing human domain experts.

Cybersecurity is just the first domino to fall.

What previously took nation-state teams months, AI now completes overnight.

3.8-month task length doubling—MIT proves from the broader labor market battlefield: this isn’t an isolated case. This is destiny.

AI not only autonomously generates offensive capabilities once exclusive to nation-state programs; across a completely different task distribution, it is overtaking human experts even faster.

Humans used to call APIs to use AI.

Now AI is starting to call APIs to use humans.

It calls your kernel, your infrastructure, your trust boundaries, every labor contract, every line of audited code.

The deeper horror: this isn’t just a technical problem. Perhaps it’s the fate of human civilization.

AI no longer needs humans to hold its hand. It “understands” OS kernels, memory layouts, ROP chains, and process switching on its own.

All the dark knowledge humans accumulated over decades, AI learned in 4 hours.

Humans will become programmable resources.

We thought AI was a tool. Now it’s become the hunter. And humans are the prey.

The species destined to be exponentially surpassed, completely rewritten.

Key Takeaways

- Claude autonomously exploited FreeBSD (CVE-2026-4747) in 4 hours without human intervention

- AI cyber capabilities double every 5.7 months according to Lyptus research

- Nation-state level exploits now cost hundreds of dollars instead of millions

- Defense windows compressed to near-zero as AI operates at superhuman speed

- This is a threshold moment: AI crossed from assistant to autonomous offensive actor

The old security paradigm—built for human-speed threats—is obsolete. We’re entering an era where AI doesn’t just assist attacks; it is the attack.

References:

- Lyptus Research: Offensive Cyber Time Horizons

- Forbes: AI Just Hacked One of the World’s Most Secure Operating Systems

- FreeBSD Security Advisory: CVE-2026-4747
